In defence of integration tests

This post was originally written while I was at LShift / Oliver Wyman.

There’s a notion that ‘Integration tests are somehow rubbish and we should replace them with contract tests’ that I wish to reject.

This video has some straw man arguments that are just wrong:

‘the more integration tests we have the less design feedback we get’

‘writing more integration tests which encourage me to design more sloppily’

Really? Certainly following the smell of a unit test allows one to pinpoint the fault more clearly, but does an integration test “encourage” one to design sloppily?

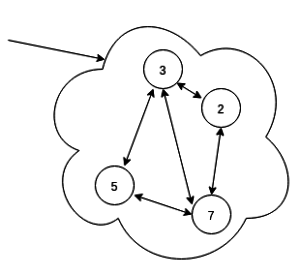

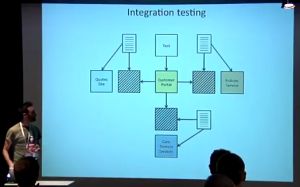

Notice that, in the video, an application checked with integration tests ostensibly looks like this (around 4′):

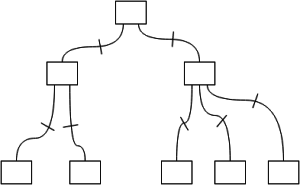

whereas one checked with contract tests suddenly looks like this (around 52′):

Is that a magical consequence of contract tests, or is the author being a little disingenuous?…

So what are integration tests good for? My claim is this:

Integration tests can be used for a formal description of the client’s specification.

They are so formal, in fact, that they are executable, and therefore automatically and objectively verifiable. Unit tests are what keep a developer sane; integration tests prove to the client that you’ve delivered what they asked for. If written correctly, they have the side benefit that the client’s manual UAT reveals fewer functional bugs, simply because there are fewer to find (GUI designers will always care about the colour of a font, and there’s not much that integration tests can do about that). Note that TDD can be applied in various ways in both cases, but that’s orthogonal to this discussion.
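
As a rough illustration of an integration test reading as an executable specification, here is a minimal Python sketch. The story, the `Shop` application, its `Inventory` component and the `handle` entry point are all hypothetical, invented for this example. The point is only that the test drives the assembled application through its public entry point and asserts on what the client would observe, so it reads like the story rather than like a tour of the internal classes.

```python
import unittest


class Inventory:
    """Hypothetical internal component: tracks stock levels."""

    def __init__(self, stock):
        self._stock = dict(stock)

    def take(self, item):
        if self._stock.get(item, 0) <= 0:
            raise ValueError(f"{item} is out of stock")
        self._stock[item] -= 1


class Shop:
    """Hypothetical application, assembled from its components."""

    def __init__(self, inventory):
        self._inventory = inventory
        self._baskets = {}

    def handle(self, command, customer, item=None):
        # Single public entry point: the integration test only talks to this.
        basket = self._baskets.setdefault(customer, [])
        if command == "add":
            basket.append(item)
            return f"{item} added"
        if command == "checkout":
            for thing in basket:
                self._inventory.take(thing)
            self._baskets[customer] = []
            return f"order placed: {', '.join(basket)}"
        raise ValueError(f"unknown command {command!r}")


class CustomerCanBuyAnItem(unittest.TestCase):
    """Story: a customer can add an item to their basket and check out."""

    def test_add_then_checkout(self):
        shop = Shop(Inventory({"teapot": 1}))
        self.assertEqual(shop.handle("add", "alice", "teapot"), "teapot added")
        self.assertEqual(shop.handle("checkout", "alice"), "order placed: teapot")
```

Run it with `python -m unittest` against the file. The test name and docstring carry the client’s story; the assertions are the acceptance criteria.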

So integration tests are essential for these reasons alone, but should we use them for anything more than that?

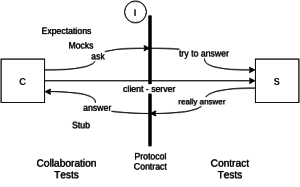

The ideal structure of a contract test is this (40′55″ in the video above):

But in practice the reality is clearly this:

The client-server link is only really tested during integration: the contract tests are completely separate from the client that will actually use the supplier. You can have no formal confidence that the contract tests are testing anything useful for the client code.

Freeman and Pryce note a related example:

The team had been writing acceptance tests to capture requirements and show progress to their customer representatives. They had been writing unit tests for the classes of the system, and the internals were clean and easy to change. They had been making great progress, and the customer representatives had signed off all the implemented features on the basis of the passing acceptance tests.

But the acceptance tests did not run end-to-end – they instantiated the system’s internal objects and directly invoked their methods. The application actually did nothing at all. Its entry point contained only a single comment:

// TODO implement this

– Growing Object-Oriented Software, Guided by Tests – Testing end-to-end

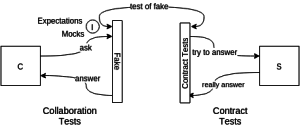

This discussion of contract tests makes it clear:

The contract tests (the icons that look like text pages) are completely unrelated to the real client (the yellow icon in the middle).

One useful observation that Stefan Smith does make is that if your contract tests are written in a very lightweight system (i.e. one with few or no dependencies that is simple to run), then the other, independent team writing the supplier may find them useful as tests for their own system. This could act as a social lever, getting the two teams to work on the contract tests together and improving communication between them. Ideally the contract tests would form part of the supplier service’s documentation; depending on configuration, the contract tests can become the supplier’s integration tests.
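
One way to get that "depending on configuration" behaviour is to write the contract tests once as a reusable suite and bind it to different implementations. The sketch below assumes a hypothetical key-value supplier with `put`/`get` methods; `InMemoryFakeStore` stands in for the client team’s Fake. A subclass whose `make_store()` returns a client for the real service is not shown, since that depends entirely on the supplier’s actual API.

```python
import unittest


class InMemoryFakeStore:
    """Hypothetical Fake of a key-value supplier service."""

    def __init__(self):
        self._data = {}

    def put(self, key, value):
        self._data[key] = value

    def get(self, key):
        # Contract: a missing key yields None rather than raising.
        return self._data.get(key)


class StoreContract:
    """Contract tests, written once and mixed into one TestCase per implementation.

    Subclasses provide make_store(). This class is deliberately not a
    TestCase, so the runner never tries to execute it on its own.
    """

    def make_store(self):
        raise NotImplementedError

    def test_get_returns_what_was_put(self):
        store = self.make_store()
        store.put("k", "v")
        self.assertEqual(store.get("k"), "v")

    def test_put_overwrites_previous_value(self):
        store = self.make_store()
        store.put("k", "old")
        store.put("k", "new")
        self.assertEqual(store.get("k"), "new")

    def test_missing_key_returns_none(self):
        self.assertIsNone(self.make_store().get("absent"))


class FakeStoreContractTest(StoreContract, unittest.TestCase):
    def make_store(self):
        return InMemoryFakeStore()


# A RealStoreContractTest(StoreContract, unittest.TestCase) whose make_store()
# returns a client for the real supplier would run exactly the same checks,
# and the supplier's team could run it as part of their own integration tests.
```

Keeping the suite free of anything but the standard library is what makes it plausible for the other team to pick it up.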

So which is better: integration tests or unit tests? And where do contract tests fit in? The answer, of course, is that we need all of them, and it depends.

- Integration tests (“end-to-end”) prove that your system really does fit together properly with its dependencies and, as a side benefit, can be used to express the journeys described in the stories of the client specifications. They are “black box” and not there to exercise the multitude of code paths.

- Unit tests (“isolation”) are “white box” and there to exercise all corners of a class. Smells from the set-up of these tests can also pressure the developer to reduce the intertwingledness of the class by refactoring.

- Contract tests are best used if a service you depend on is so slow or flaky that you can’t rely on it in the integration tests. In these cases you have to write a Fake (“stub”) and the contract tests are the only way you can be sure your Fake matches the real supplier.

Note that nearly all unit tests of classes that have injectable dependencies use stubs, almost by definition, usually through a tool like Mockito or some such. In a good design, though, the services they are “Faking” should be simple enough that the stub is clear to the developer and so does not need to be checked with its own contract tests (infinite recursion awaits you there). Definitions of “clear” and “obvious” are, of course, the bread and butter of developer debate…
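
In Python, `unittest.mock` plays much the same role that Mockito does in Java. Here is a minimal sketch with a hypothetical `InvoiceService` whose exchange-rate source is injected. The stub amounts to one line whose behaviour is obvious at a glance, which is exactly the case where it does not need contract tests of its own.

```python
import unittest
from unittest.mock import Mock


class InvoiceService:
    """Hypothetical class under test; its rate source is injected."""

    def __init__(self, rates):
        self._rates = rates

    def total_in(self, currency, amounts_gbp):
        rate = self._rates.rate("GBP", currency)
        return round(sum(amounts_gbp) * rate, 2)


class InvoiceServiceTest(unittest.TestCase):
    def test_converts_total_using_injected_rate(self):
        rates = Mock()
        rates.rate.return_value = 1.25  # the whole stub: simple enough to trust
        service = InvoiceService(rates)

        self.assertEqual(service.total_in("EUR", [10, 30]), 50.0)
        # White-box check: the class asked for the rate it should have.
        rates.rate.assert_called_once_with("GBP", "EUR")
```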

So maybe the confusion comes from the difference between simple stubs and Fakes, and this is a non-debate. If your service stubs get so complex that you have to call them Fakes, then you need the unit tests of the service to check them; in fact, you have to write those tests yourself, because the supplier’s source code is inaccessible. These Fakes then enable you to write integration tests (“end-to-nearly-end”) that can run in memory or in your Continuous Integration system.
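
An "end-to-nearly-end" test might look like the sketch below: the application is assembled as it would be in production, except that the slow or flaky supplier is swapped for an in-memory Fake at the composition root. `PaymentGateway`, `FakePaymentGateway`, `CheckoutApp` and `build_app` are hypothetical names for illustration. The Fake is only as trustworthy as the contract tests that show it behaves like the real gateway.

```python
import unittest
from typing import Protocol


class PaymentGateway(Protocol):
    """The supplier's interface as the client code sees it (hypothetical)."""

    def charge(self, account: str, pence: int) -> str: ...


class FakePaymentGateway:
    """In-memory Fake: records charges, declines blocked accounts and non-positive sums."""

    def __init__(self, blocked=()):
        self.blocked = set(blocked)
        self.charges = []

    def charge(self, account: str, pence: int) -> str:
        if account in self.blocked or pence <= 0:
            return "declined"
        self.charges.append((account, pence))
        return "ok"


class CheckoutApp:
    def __init__(self, gateway: PaymentGateway):
        self._gateway = gateway

    def checkout(self, account: str, basket_pence: list[int]) -> str:
        status = self._gateway.charge(account, sum(basket_pence))
        return "Thank you!" if status == "ok" else "Payment failed"


def build_app(gateway: PaymentGateway) -> CheckoutApp:
    # Composition root: production passes the real gateway client here,
    # while the in-memory / CI test run passes the Fake instead.
    return CheckoutApp(gateway)


class CheckoutJourneyTest(unittest.TestCase):
    def test_successful_purchase_charges_the_basket_total(self):
        gateway = FakePaymentGateway()
        app = build_app(gateway)
        self.assertEqual(app.checkout("acct-1", [250, 199]), "Thank you!")
        self.assertEqual(gateway.charges, [("acct-1", 449)])

    def test_blocked_account_sees_failure(self):
        app = build_app(FakePaymentGateway(blocked={"acct-2"}))
        self.assertEqual(app.checkout("acct-2", [100]), "Payment failed")
```

Because nothing leaves the process, a suite like this is fast and deterministic enough to run on every CI build.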

Contract tests are there to help you verify reality and ensure your Fakes are correct, but they’re no guarantee.

P.S. Freeman and Pryce’s book is excellent: every serious software developer should read at least sections 1 and 3 of it.

Update

The Pact system is very interesting: it may even be a way of turning slow integration tests into fast unit(ish) tests.
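
Pact is built around consumer-driven contracts. The consumer’s tests run against a mock provider that records each expected request/response pair, and the recorded contract is later replayed against the real provider. The sketch below hand-rolls that idea in plain Python to show its shape. It does not use the Pact library, it matches requests and responses by simple equality rather than Pact’s matchers, and `RecordingMockProvider`, `verify_provider` and the user-lookup interaction are all hypothetical.

```python
import json


class RecordingMockProvider:
    """Stands in for the provider during the consumer's (fast) tests,
    answering only the interactions declared up front and recording them."""

    def __init__(self):
        self.interactions = []

    def given(self, request, response):
        self.interactions.append({"request": request, "response": response})

    def handle(self, request):
        for interaction in self.interactions:
            if interaction["request"] == request:
                return interaction["response"]
        raise AssertionError(f"unexpected request: {request}")

    def write_contract(self):
        # The contract: a serialised list of the consumer's expectations.
        return json.dumps(self.interactions)


def fetch_user_name(provider, user_id):
    """Consumer code under test."""
    response = provider.handle({"method": "GET", "path": f"/users/{user_id}"})
    return response["body"]["name"]


def verify_provider(contract_json, real_handler):
    """Provider side: replay every recorded request and compare responses."""
    failures = []
    for interaction in json.loads(contract_json):
        actual = real_handler(interaction["request"])
        if actual != interaction["response"]:
            failures.append((interaction["request"], actual))
    return failures


# Consumer test: fast, in memory, and it records what it relied on.
mock = RecordingMockProvider()
mock.given({"method": "GET", "path": "/users/42"},
           {"status": 200, "body": {"name": "Ada"}})
assert fetch_user_name(mock, 42) == "Ada"
contract = mock.write_contract()


# Provider verification, run later by the supplier's team against their service.
def real_provider(request):
    users = {"/users/42": {"name": "Ada"}}
    return {"status": 200, "body": users[request["path"]]}


assert verify_provider(contract, real_provider) == []
```

Neither side ever talks to the other over a network, yet the contract file ties the consumer’s Fake to the provider’s real behaviour, which is why this looks like a route to fast unit(ish) tests.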